ROI Case File No.485 'The Hundred Thousand Lines a Human Watched'

Chapter One: Regulation Changes Always Come Suddenly

"We received tax law revision details last week. The deadline is three months out."

Michael, CTO of TechFlow, spread a development board on the desk. Dozens of sticky notes—blue for test items, red for incomplete, green for complete. The red count was overwhelming.

"The modification itself takes a week," Michael continued. "The problem is testing. Regulation changes have unreadable impact scope. Touch one spot in payroll calculation and it ripples to social insurance calculation, year-end adjustment logic, and printout output. Covering every pattern requires more than 100,000 lines of tests."

"How are tests currently performed?" Claude confirmed.

"Over 90% is manual," Michael answered. "We use Power Automate, but utilization is under 10% of the whole. Only repetitive tasks like volume tests are automated. Fine branches, external service data linkage, UI confirmation—all done by engineers watching screens, typing patterns by hand, verifying results with their eyes."

"How many staff do you throw at each regulation change?" Gemini asked.

"For the most recent modification, seven engineers spent three weeks on testing alone," Michael answered. "During that period, all new feature development stopped. While competitors ship new features, our engineers are stuck on testing."

"Any missed bugs during the test period?" I confirmed.

"Yes," Michael answered. "Three defects surfaced within two weeks of production release after the recent modification. Human testing inevitably misses things. Fatigue makes you check the same pattern repeatedly, or conversely skip patterns."

"What happened when you tried to introduce automation tools?" Claude asked.

"We've examined several times," Michael answered. "But we couldn't choose which tool. AutoTestPro, Selenium family, Playwright, commercial tools—too many options, and we couldn't establish a comparison standard in-house. Undecided, the next regulation change arrives."

"You can't decide because there's no basis for deciding," I said quietly. "That's the domain DESC solves."

Chapter Two: The Four Stages DESC Demands

"This case needs DESC."

Claude wrote four letters on the whiteboard. D, E, S, C.

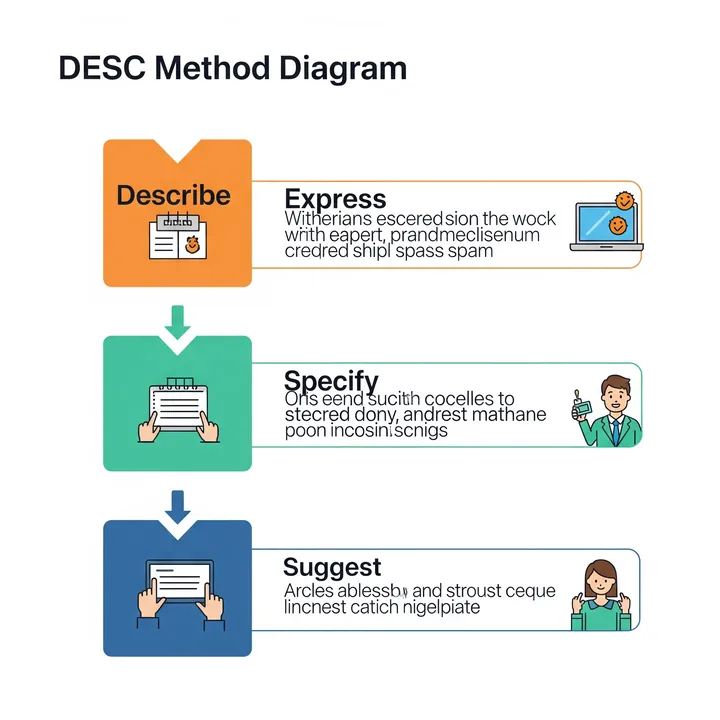

"DESC stands for Describe, Express, Specify, Choose—a framework for organizing a situation and narrowing down choices through four stages," I explained. "Originally a communication technique, it also applies to tool selection. Describe the current state, express your company's requirements, specify necessary conditions, then choose—following this order lets you escape the 'too many options, can't decide' state. TechFlow had been starting from catalog comparison without going through this order."

"Let's measure current cost first," Gemini said, opening ROI Polygraph. Michael's operational logs went in.

"Monthly test cost is out," Gemini read. "With an average engineering headcount of 15, monthly test labor is 720 hours—about 30% of total work time. At ¥5,000/hour, ¥3.6 million monthly. New-feature development stagnation during concentrated testing for regulation changes is estimated at ¥800,000 monthly in opportunity loss. Post-release defect response—production incident recovery, customer response, additional testing—averages ¥400,000 monthly. Total: ¥4.8 million monthly in test-related costs. Annualized: ¥57.6 million."

Michael stared at the screen in silence. "We're spending over ¥50 million annually on testing."
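The figures Gemini read out can be reproduced with a few lines of arithmetic. The sketch below is only an illustration, not how ROI Polygraph works internally: the variable names are invented here, and the 160 working hours per engineer per month is an assumption, chosen because it is consistent with the stated 30% share (the story does not give it).

```python
# Inputs as stated in the story; names are illustrative.
HEADCOUNT = 15
TEST_HOURS_PER_MONTH = 720
HOURLY_RATE_YEN = 5_000
OPPORTUNITY_LOSS_YEN = 800_000   # stalled feature work, per month
DEFECT_RESPONSE_YEN = 400_000    # post-release defect handling, per month

# Assumption (not in the story): 160 working hours per engineer per month.
HOURS_PER_ENGINEER_PER_MONTH = 160


def monthly_test_cost() -> dict:
    """Return labor cost, total monthly cost, annual cost, and test share."""
    labor = TEST_HOURS_PER_MONTH * HOURLY_RATE_YEN
    total = labor + OPPORTUNITY_LOSS_YEN + DEFECT_RESPONSE_YEN
    share = TEST_HOURS_PER_MONTH / (HEADCOUNT * HOURS_PER_ENGINEER_PER_MONTH)
    return {
        "labor": labor,
        "total": total,
        "annual": total * 12,
        "test_share": share,
    }


costs = monthly_test_cost()
for key, value in costs.items():
    print(f"{key}: {value:,}")
```

Under these inputs the labor cost comes to ¥3.6 million, the monthly total to ¥4.8 million, and the annual total to ¥57.6 million, matching the numbers in the dialogue.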

"So, let's design with DESC," I continued.

[D—Describe: Describe Current State Concretely]

"First, classify the test targets," Claude said. "Divide Michael's team's tests into four. First, simple repetitive processing—volume testing, already automated. Second, screen UI operations—login, input, button-click type. Third, data linkage—API-mediated external service interaction. Fourth, branch conditions—business logic with results varying by condition."

"Which becomes the automation target?" Michael confirmed.

"Not all—prioritized," I answered. "Most suited for automation are high-frequency, clearly-patterned tests. By this criterion, data linkage tests come first, UI operations second, branch conditions last. Branch conditions are the most complex and the lowest cost-benefit domain for automation."

[E—Express: Express Company Requirements]

"Articulate what TechFlow requires in an automation tool," Gemini continued. "Michael, which characteristic matters most?"

Michael thought briefly. "Post-introduction maintainability. Writing test scripts is hard work, but if maintenance is too hard, we'll end up back on manual testing."

"Maintainability is top priority," Claude noted. "Second, data-linkage testing—API calls and response verification as standard features. Third, coexistence with existing Power Automate assets."

"By expressing, the evaluation axes narrow to three," I continued. "Before narrowing the options, narrow the selection criteria. That's the DESC sequence."

[S—Specify: Specify Necessary Conditions]

"Set a pass/fail line for each of the three axes," Gemini continued. "Maintainability—test scripts must be writable in a code-adjacent format, with Git version control possible. Data linkage—REST API, GraphQL, and authentication token management must be standard-supported. Coexistence—must be able to call Power Automate workflows or pass data back and forth."

"Products that don't meet these pass lines get excluded regardless of other excellence," Claude supplemented. "No perfect product exists. Making only the requirements that TechFlow can't do without the passing criterion narrows the options to effectively three or four products."

[C—Choose: Choose After Narrowing]

"After narrowing to three products, conduct PoC—proof of concept," I continued. "With each product, automate the most painful test case for real. For example, build a representative data-linkage test pattern in all three. Comparing build time, execution time, and maintainability surfaces gaps invisible in catalog comparison."

"Let's simulate with ROI Proposal Generator," Gemini suggested.

- Initial cost: Tool introduction, initial script construction, engineer training totaling ¥4.2 million

- Monthly cost: Tool license ¥180,000/month

- Monthly savings: ¥1.08 million from a 30% reduction in test labor in year one, ¥600,000 from reduced opportunity loss during regulation changes, and ¥250,000 from fewer defects, totaling ¥1.93 million

- Net monthly savings: ¥1.93M − ¥0.18M = ¥1.75M

- Payback period: ¥4.2M ÷ ¥1.75M ≈ 2.4 months
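The simulation above reduces to a short calculation, sketched below. The function and variable names are illustrative and are not taken from ROI Proposal Generator; the sketch also assumes savings begin at the full monthly rate from the first month, which the story does not state.

```python
# Inputs from the simulation list; names are illustrative.
INITIAL_COST_YEN = 4_200_000
LICENSE_YEN_PER_MONTH = 180_000
CURRENT_TEST_LABOR_YEN = 3_600_000  # 720 hours x ¥5,000
LABOR_REDUCTION_PERCENT = 30        # year-one target

monthly_savings = {
    # Integer arithmetic avoids floating-point rounding on yen amounts.
    "test_labor": CURRENT_TEST_LABOR_YEN * LABOR_REDUCTION_PERCENT // 100,
    "opportunity_loss": 600_000,
    "defects": 250_000,
}


def payback_months(initial: int, gross_savings: int, running_cost: int) -> float:
    """Months needed for net savings to cover the initial cost."""
    net = gross_savings - running_cost
    if net <= 0:
        raise ValueError("net monthly savings must be positive to pay back")
    return initial / net


gross = sum(monthly_savings.values())
net = gross - LICENSE_YEN_PER_MONTH
months = payback_months(INITIAL_COST_YEN, gross, LICENSE_YEN_PER_MONTH)
print(f"gross ¥{gross:,}, net ¥{net:,}, payback {months:.1f} months")
```

With these inputs the gross savings are ¥1.93 million, the net savings ¥1.75 million, and the payback period 2.4 months.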

"Payback within 2.5 months," Gemini summarized. "From year two, as automation scope expands, savings stack higher. A state where engineers aren't pulled into testing during regulation changes could put new-feature development pace on par with competitors."

Michael reviewed the figures. "I see now that we couldn't decide, despite having so many options, because we had never settled on a basis for deciding."

Chapter Three: Deciding Where Not to Automate

"Let me organize the approach," I said at the whiteboard.

"Weeks one and two—four-category test classification and prioritization. Clarify automation targets versus continued-manual targets. Week three—requirement definition and pass/fail line setting. Weeks four and five—narrow to three products and conduct PoC. Week six—product decision and contracting. Weeks seven and eight—initial script construction and training. Week nine onward—phased automation scope expansion."

"Is the choice not to automate branch-condition testing really correct?" Michael confirmed.

"It depends on the stage," Claude answered. "Focus on data linkage and UI operations in year one. Branch conditions become a year-two-plus consideration. Trying to automate everything in the initial phase gets stuck on the complex parts and stops everything. Deciding where not to automate is the condition for successful automation."

Michael closed his notebook. "There are domains humans should watch and domains machines should watch—deciding that should have come before tool selection."

Chapter Four: The Day Engineers Returned to New Features

Eight months later, a report from Michael arrived.

In tool selection, the PoC led to the adoption of a candidate that had not originally been under consideration. "We chose not the product that topped the catalog comparison but the one that proved fastest and most maintainable in the PoC," Michael wrote. Following the DESC order had made an unexpected choice possible.

Automation started with data-linkage testing. Three months in, about 70% of all API test cases were automated. UI operation tests followed, and at five months of operation 45% of the whole was automated. Test labor fell from 720 hours a month to 500, a reduction of 220 hours, exceeding Michael's initial prediction.

The biggest change showed up in regulation-change response. An August tax-law revision was tested by three engineers in two weeks. The same work used to take seven engineers three weeks, so headcount fell to less than half, duration fell by a third, and total effort dropped from 21 person-weeks to 6. "During that time, new-feature development didn't stop," Michael wrote.

No post-release defects were found during six months of operation. "Machines don't tire. The patterns humans missed, machines don't miss," the report noted.

In the engineer-wide survey, 13 of 15 responded that "irritation with testing work decreased." Twelve said "time to concentrate on new-feature development increased." Michael closed with these words: "We returned engineers to engineer-like work."

The hundred thousand lines a human watched were entrusted to a machine.

"The reason you can't decide with too many options is that the basis for deciding hasn't been decided. DESC's four stages—describe, express, specify, choose—force the work of creating a basis before selection. For TechFlow, the basis was 'maintainability,' 'data linkage,' and 'coexistence.' Once the basis is decided, options narrow naturally. Deciding where not to automate is the precondition for successful automation. The 100,000 lines a human watched were more accurate when a machine watched. Engineers returned to new features."

Tools Used

- ROI Polygraph — Visualizing test labor, opportunity loss, and defect response cost

- ROI Proposal Generator — Test automation tool payback simulation