ROI Case File No.474 'Thirty Minutes of Waiting, Then Manual Entry'

Chapter 1: The Tool Went In, and They Went Back to Manual

"Two months after implementation, the staff had gone back to manual entry. The AI tool had no effect."

John Smith, Project Manager at TechScribe, opened his laptop as he spoke. The error log for their current AI transcription tool filled the screen. Red alerts occupied roughly a third of the display.

"What kinds of errors are appearing?" I asked.

"Two types: processing that stalls mid-way, and transcriptions that are clearly wrong," John answered. "Processing one hour of audio takes thirty minutes. After thirty minutes of waiting, if the transcription has errors, we still have to fix them by hand. When you add the manual correction time, it's actually faster to just type from scratch—that's the calculation we ended up with."

"How many meeting minutes do you produce per month?" Claude asked.

"About a hundred, mostly for condominium association general meetings," John answered. "Each file averages one to one-and-a-half hours of audio."

"Not all hundred are erroring out, I assume," Gemini said.

"Error rate is around 30%," John answered. "About thirty files have problems. Those thirty files land on staff as extra monthly hours. And since we can't predict which files will error, we have to review all of them."

"So the review cost falls on every file, not just the thirty that fail," I said.

"Exactly," John continued. "The current contract ends in October. By then we need to have selected a new tool and completed the migration. But I don't want to repeat the same mistake. The question is what criteria to use."

"Selection criteria are today's topic," I answered.

Chapter 2: The Four Perspectives of the BSC

"This case calls for the BSC."

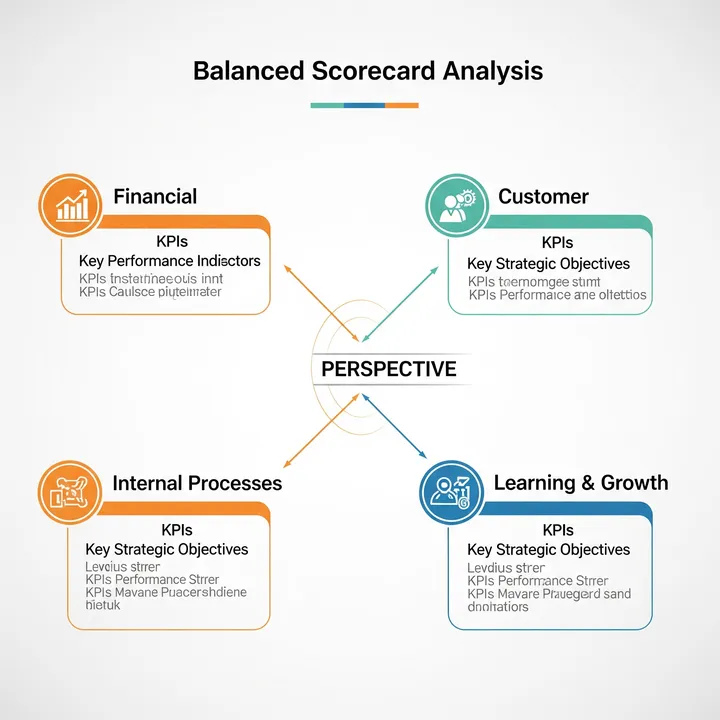

Claude wrote four words on the whiteboard: Financial, Customer, Internal Process, Learning and Growth.

"BSC stands for Balanced Scorecard—a framework for evaluating organizational challenges across four perspectives simultaneously," I explained. "The most common failure in tool selection is deciding from only one perspective. Choosing for speed alone, or price alone, and then discovering a problem from another angle. The BSC is a blueprint for selecting a tool that satisfies all four perspectives at once."

"Let's start by measuring the current cost," Gemini said, opening ROI Polygraph. Work logs and error data from John were entered.

"The monthly cost of the minutes work is in," Gemini read aloud. "Four staff handle 100 files per month. Processing wait time: 100 hours/month at ¥2,500/hr = ¥250,000. Error correction for 30 files: 60 hours/month = ¥150,000. Mandatory review of every file: 40 hours/month = ¥100,000. Total: ¥500,000/month in inefficiencies from the current tool. Annualized: ¥6,000,000."

John said quietly, "The cost of continuing to use it has exceeded the cost of implementing it."
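The figures Gemini read out can be reproduced with a few lines of arithmetic. The following is a minimal sketch of that calculation only, not a description of how ROI Polygraph works internally; the variable names are illustrative.

```python
HOURLY_RATE_YEN = 2_500  # staff hourly rate quoted in the readout

# Monthly hours lost to the current tool, as read out by Gemini
monthly_hours = {
    "processing_wait": 100,
    "error_correction": 60,   # covers the roughly 30 problem files
    "full_file_review": 40,
}

# Convert each category of lost hours into yen
monthly_cost = {item: hours * HOURLY_RATE_YEN for item, hours in monthly_hours.items()}
monthly_total = sum(monthly_cost.values())
annual_total = monthly_total * 12

for item, cost in monthly_cost.items():
    print(f"{item}: {cost:,} yen/month")
print(f"total: {monthly_total:,} yen/month, {annual_total:,} yen/year")
```

Keeping the three categories separate matters later: each one is reduced by a different amount when a new tool is evaluated.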

"Now let's design selection criteria through the BSC," I continued.

[Financial Perspective — See Cost in Four Layers]

"When evaluating tools financially, most people only compare monthly fees," Claude said. "But the cost of a tool has four layers: license fees, implementation and setup costs, staff learning costs, and loss from remaining inefficiencies—like the thirty-minute wait we're measuring now. Without adding all four layers, the cheaper tool often turns out to be more expensive."

"What's the financial cost of the thirty-minute wait?" John asked.

"¥250,000 per month," Gemini answered. "If a new tool reduces that to five minutes, this ¥250,000 effectively goes to zero. Even if that tool costs ¥100,000 more per month, it's financially superior."
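Gemini's point can be checked by adding up all four layers over a fixed horizon. The sketch below is illustrative only. The current license fee of ¥60,000 is inferred from the proposal figures in this file (¥80,000 for the new tool, a ¥20,000 increase). The pricier tool, its one-time setup and learning costs, and the 12-month horizon are hypothetical assumptions, not figures from the case.

```python
from dataclasses import dataclass


@dataclass
class ToolCost:
    monthly_license: int        # layer 1: license fees
    setup_once: int             # layer 2: implementation and setup (one-time)
    learning_once: int          # layer 3: staff learning (one-time)
    monthly_inefficiency: int   # layer 4: loss from remaining inefficiencies

    def total(self, months: int) -> int:
        """Sum all four layers over the given number of months."""
        recurring = (self.monthly_license + self.monthly_inefficiency) * months
        return recurring + self.setup_once + self.learning_once


HORIZON_MONTHS = 12  # assumption: compare over one year

# Current tool: setup and learning are already sunk, inefficiency is 500,000 yen/month
current = ToolCost(60_000, 0, 0, 500_000)

# Hypothetical tool costing 100,000 yen more per month that removes only the
# 250,000 yen processing wait; one-time costs are placeholder values
pricier = ToolCost(160_000, 300_000, 100_000, 250_000)

current_total = current.total(HORIZON_MONTHS)
pricier_total = pricier.total(HORIZON_MONTHS)
print(f"current: {current_total:,} yen, pricier: {pricier_total:,} yen")
print(f"difference: {current_total - pricier_total:,} yen in favor of the pricier tool")
```

Under these assumptions the tool with the higher license fee is still cheaper over the year, because the fourth layer dominates the total.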

[Customer Perspective — Whose Satisfaction Are We Measuring?]

"TechScribe's customers are the condominium association administrators," Gemini summarized. "What they need is accurate records delivered on time. How many delivery delays occurred due to tool errors over the past three months?"

"Four delays in three months," John answered.

"Four delays directly affect customer satisfaction," Claude said. "The customer-perspective criterion for tool selection is not the error rate itself but whether delivery delays can be brought to zero. Choose the tool whose gains in speed and accuracy carry through to on-time delivery for the customer."

[Internal Process Perspective — Does It Fit the Current Workflow?]

"Walk me through TechScribe's minutes workflow," I asked John.

"Audio recording → tool transcription → staff review and editing → paste into proprietary format → send," John answered. "The pasting step is manual every time."

"Two criteria from the internal process perspective," Claude said. "First: does the transcription accuracy exceed 95%? Second: can output to your proprietary format be automated? If both are satisfied, staff work becomes review-only."

[Learning and Growth Perspective — Will Staff Keep Using It?]

"Did you ask staff why they stopped using the current tool?" I asked.

"I did," John answered. "The most common response was feeling anxious every time an error appeared. Not knowing which part to trust."

"From a learning and growth perspective, the criterion is how quickly staff can develop trust in the tool," Gemini summarized. "Specifically: does onboarding take one day or less? Is support readily available? Is it clear what to do when an error occurs? A tool that staff can't trust won't be used, regardless of how capable it is."

"Let's run the new tool implementation through ROI Proposal Generator," Gemini proposed.

Costs and savings for a BSC-satisfying tool were laid out.

- Initial cost: Tool migration + setup + staff training — ¥400,000

- Monthly cost: New tool license — ¥80,000/month (¥20,000 increase vs. current)

- Monthly savings: Processing wait eliminated = ¥250,000; 90% error correction reduction = ¥135,000; shift from full review to spot-check = ¥70,000; total = ¥455,000/month

- Net monthly savings: ¥455,000 − ¥20,000 (incremental cost) = ¥435,000/month

- Payback period: ¥400,000 ÷ ¥435,000 = approx. 0.9 months
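The payback figure in the list above follows from the other line items. A minimal sketch of that arithmetic, using only the numbers in the list (the variable names are illustrative, and this is not a description of ROI Proposal Generator's internals):

```python
initial_cost = 400_000          # migration + setup + staff training
incremental_license = 20_000    # new license is 20,000 yen/month above the current one

# Monthly savings by category
monthly_savings = {
    "processing_wait": 250_000,                # wait eliminated
    "error_correction": 150_000 * 90 // 100,   # 90% reduction of 150,000
    "review": 70_000,                          # full review replaced by spot-checks
}

gross_savings = sum(monthly_savings.values())
net_savings = gross_savings - incremental_license
payback_months = initial_cost / net_savings

print(f"gross: {gross_savings:,} yen/month, net: {net_savings:,} yen/month")
print(f"payback: {payback_months:.1f} months")
```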

"Payback in under one month," Gemini summarized. "Six months remain until the October contract end. Starting now allows two months for migration testing and one month for full rollout."

Chapter 3: Choosing Through Four Perspectives

"Let me lay out the selection process," I said, standing at the whiteboard.

"First, narrow the candidates to three vendors based on processing speed and accuracy specs. Then score each of the three against the four BSC perspectives—Financial, Customer, Internal Process, Learning and Growth—each worth 25 points, 100 total. Finally, run a one-month trial with the top scorer and verify the actual numbers."
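The scoring step can be sketched as a small ranking routine. Everything below is hypothetical: the vendor names and scores are invented for illustration, and only the structure (four perspectives, 25 points each, 100 total) comes from the process described above.

```python
PERSPECTIVES = ("financial", "customer", "internal_process", "learning_growth")
MAX_PER_PERSPECTIVE = 25  # four perspectives x 25 points = 100 total


def total_score(scores: dict[str, int]) -> int:
    """Validate each perspective score and return the 100-point total."""
    for perspective in PERSPECTIVES:
        value = scores[perspective]
        if not 0 <= value <= MAX_PER_PERSPECTIVE:
            raise ValueError(f"{perspective} score {value} is outside 0-{MAX_PER_PERSPECTIVE}")
    return sum(scores[p] for p in PERSPECTIVES)


# Hypothetical scores for three shortlisted vendors
candidates = {
    "Vendor A": {"financial": 22, "customer": 15, "internal_process": 20, "learning_growth": 10},
    "Vendor B": {"financial": 18, "customer": 21, "internal_process": 19, "learning_growth": 20},
    "Vendor C": {"financial": 24, "customer": 12, "internal_process": 14, "learning_growth": 16},
}

# Highest total first; the top entry goes into the one-month trial
ranking = sorted(candidates, key=lambda name: total_score(candidates[name]), reverse=True)
for name in ranking:
    print(name, total_score(candidates[name]))
```

In this made-up data, the vendor with the best financial score ranks last, which is the single-perspective trap the equal weighting is meant to expose.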

"What do we look at during the trial?" John asked.

"Three things," Claude answered. "Actual error rate, processing time, and how long staff spend on review. If those three figures are close to the spec sheet, adopt the tool. If there's a significant gap, move to the second-ranked option. October is six months away—there's room to course-correct."
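One way to make "close to the spec sheet" testable is to fix a tolerance before the trial starts. The sketch below assumes a 20% tolerance and uses invented spec and trial values; neither the threshold nor the numbers come from the case, so treat them as placeholders to be agreed on beforehand.

```python
TOLERANCE = 0.20  # assumption: more than 20% worse than spec counts as a significant gap


def within_spec(spec: float, measured: float, tolerance: float = TOLERANCE) -> bool:
    """Lower is better for all three metrics, so pass if measured <= spec * (1 + tolerance)."""
    return measured <= spec * (1 + tolerance)


# Hypothetical spec-sheet claims and one-month trial measurements
spec = {"error_rate_pct": 2.0, "minutes_per_audio_hour": 5.0, "review_minutes_per_file": 15.0}
trial = {"error_rate_pct": 2.2, "minutes_per_audio_hour": 4.5, "review_minutes_per_file": 25.0}

# Collect every metric where the trial result falls outside the tolerance
gaps = [metric for metric in spec if not within_spec(spec[metric], trial[metric])]
decision = "adopt" if not gaps else "move to second-ranked option"

print("metrics outside tolerance:", gaps)
print("decision:", decision)
```

Here the tool meets its error-rate and speed claims but staff review time is well over spec, so the rule sends the team to the second-ranked option.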

"I think we chose the previous tool from the spec sheet alone," John said. "We skipped the trial."

"A tool reveals things you can only learn by running it," I responded. "The BSC gives you selection criteria; the trial is the experiment that confirms them. With both in place, you won't repeat the same mistake."

Chapter 4: The Day Thirty Minutes Became Three

Five months later, a report arrived from John.

The top BSC-scoring tool was put into trial. One hour of audio processed in an average of 3 minutes and 1 second. Error rate: 1.2%. During the trial period, every staff member said "there's no going back to the old tool," John noted in his report.

One month after full rollout, the monthly cost of minutes work had fallen 67% compared to the previous tool. There were zero delivery delays. Output to the proprietary format was automated, reducing staff work to content review and approval only.

John's final lines: "When we selected the previous tool, we only looked at cost and performance. The BSC added a question we hadn't thought to ask: will staff actually keep using this? Without that question, we might have selected the same kind of tool again."

The day thirty minutes became three.

"When you choose a tool from only one perspective, another perspective is where the failure comes from. The four perspectives of the BSC—Financial, Customer, Internal Process, Learning and Growth—are each an independent question. Cheap on paper but unused means costs don't fall. High-performing but misaligned with the workflow means manual entry returns. When all four are satisfied simultaneously, the tool gets used. The experience of waiting thirty minutes and going back to manual entry made the next selection precise."

Tools Used

- ROI Polygraph — Visualizing minutes work costs and error-handling hours

- ROI Proposal Generator — Simulating ROI on AI transcription tool migration